Category: Professional practice

Teaching and Learning Forum 2014 [Refereed papers]

Bev Ewens, Lesley Andrew and Rowena Scott

Edith Cowan University

Email: b.ewens@ecu.edu.au, l.andrew@ecu.edu.au, r.scott@ecu.edu.au

This paper describes the development and implementation of a standardised moderation process within the School of Nursing and Midwifery at Edith Cowan University in Western Australia. This quality improvement initiative was the result of collaboration between two nursing course coordinators and a Centre for Learning and Development academic. The school employs a large team of sessional tutors who provide marking support to the unit coordinators. The purpose of this initiative was to standardise the marking of assessments across this team and to enrich the quality of assessment. The process was informed by best available evidence and underpinned by university guidelines and policy. The process was implemented in 2013 and initial indications were that it was well received by academic staff. However, retrospective evaluation revealed low implementation rates by academic staff. Potential reasons for this lack of engagement have been postulated; however, a prospective study will be conducted in 2014 to determine the contributing factors and so improve engagement with the process.

The initial review of current moderation practices within the School's teaching context revealed the importance of developing standardisation procedures. This context refers to the diverse range of staff, including significant numbers of sessional staff, involved in the assessment process. The wide variety of both educational qualifications and experience held by these staff created the potential for variation in understanding and expectations of assessments. The aim of this initiative was to develop a whole-of-program approach and a sustainable community of practice for moderation within a quality improvement framework, which acknowledges the subjective nature of assessments (Smith, 2012).

Literature was reviewed to determine the best available evidence around assessment and moderation, in particular, resources that were part of an Australian Learning and Teaching Council (ALTC) Learning and Teaching Project (2008-2010) on moderation of fair assessment in all higher education programs (ALTC, 2012a). This review of the literature and of current practice in the School underpinned the development of the moderation process. The development process was one of collaboration with the School Executive and other academics. A pilot of the process was undertaken throughout the second semester of 2013. This paper describes the development and implementation of this pilot and preliminary findings.

Moderation is more than the checking of assessment marks; it is the quality assurance process which underpins the development of each item to ensure that the entire assessment process is fair, valid and reliable (ALTC, 2012a), enabling equivalence and comparability (ALTC, 2010). Moderation of student assessment may be summarised as a process aimed at ensuring that marks and grades are as valid, reliable and fair as possible for all students and all markers (ALTC, 2012a). The ALTC project recommends a variety of practices as integral to the moderation process, including consistency in assessment and marking; processes for ensuring comparability and quality control measures (ALTC, 2012a). In situations where a team of markers is involved, a shared understanding of assessment requirements and standards is essential (Adie et al., 2013).

Quality in education programs has been described as meeting specified standards and being fit for purpose; however, external quality evaluations are not particularly effective at ensuring quality improvement (Harvey & Williams, 2010). The heart of the holistic approach to moderation is continuous internal review (Lawson & Yorke, 2009). Moderation is a cyclical process which occurs throughout teaching and learning rather than a summative exercise at the end of the marking period (James, 2003), with activities that occur both before (i.e. quality assurance) and after (i.e. quality control) all assessment. Continuous moderation of assessment can be applied across three stages: the design and development of assessments; implementation, marking and grading; and review and evaluation (ALTC, 2010, 2012a).

Grades awarded to students are essentially a symbolic representation of the level of achievement attained, and grade integrity is defined as the extent to which each grade (or assessment mark) is strictly commensurate with the quality, breadth and depth of a student's performance (Sadler, 2009). Marking and grading in most disciplines is inevitably subjective (Hughes, 2011), but a systematic approach to identifying significant tacit beliefs may assist in reducing their effect on marker variation (Hunter & Docherty, 2011). This variation can lead to post-assessment scaling of marks, i.e. the adjustment of student assessment scores based on statistical analyses without reference to the quality of students' responses (Office of Assessment Teaching and Learning, 2010). However, post-assessment scaling of marks should be avoided (ALTC, 2012b).

Students' marks are a representation of their academic achievement and as such require decisions around them to be justified and validated (Bloxham, Boyd, & Orr, 2011). Moderation as justification is typified by conversations about confidence in making judgments about allocations of marks, and by providing quality feedback to students as justification of these judgments (Adie et al., 2013) in order to prevent, rather than respond to, student queries. A transparent, rigorous moderation process demonstrates accountability within the marking team (Adie et al., 2013). The underlying principle of quality monitoring should be the encouragement and facilitation of continuous improvement. ECU's approach to continuous improvement refers to the ECU Excellence Framework (Edith Cowan University, 2013), which is based on a cyclic model with four stages: Plan, Do, Review and Improve.

In the School of Nursing and Midwifery (SNM), undergraduate units with high enrolments (typically around 600) are partially taught and assessed by a team of sessional markers. The amount of tutoring and marking undertaken by sessional staff in any one unit ranges from none to the majority. Approximately thirty sessional staff are employed within the School at any one time across undergraduate and postgraduate practicum and theoretical units. Some of these staff teach and mark whilst others are employed solely to mark. Whilst the eligibility criteria to become a sessional staff member vary, all sessional staff are encouraged to attend two professional development (PD) sessions: an orientation day conducted by the School each semester and a PD day provided by the university. Neither session is mandatory. Assessment and moderation of assessments are covered at both sessions. However, access to training and on-campus meetings can be challenging for some sessional staff due to other work commitments and geographical location. The transient nature of sessional staff employment means the supply of experienced markers cannot be guaranteed on a semester-by-semester basis.

Communities of practice (CoPs) are not a new phenomenon and arguably have been in existence for as long as people have been learning and sharing experiences through storytelling (Lave & Wenger, 1991). Wenger (2002, p. 29) described CoPs as consisting of three interrelated terms: "mutual engagement, joint enterprise and shared repertoire." Originally conceived as a medium for learning, a CoP describes a group of people who share a common goal and interest (Roberts, 2006). Communities of practice may evolve spontaneously to address a need or be created purposefully to achieve a common interest or goal (Guldberg & Mackness, 2009). It is through the process within CoPs that members can develop both personally and professionally by learning from each other (Lave & Wenger, 1991). The authors aimed to develop a CoP for moderation of assessments in SNM to promote a shared culture of knowledge and beliefs around moderation.

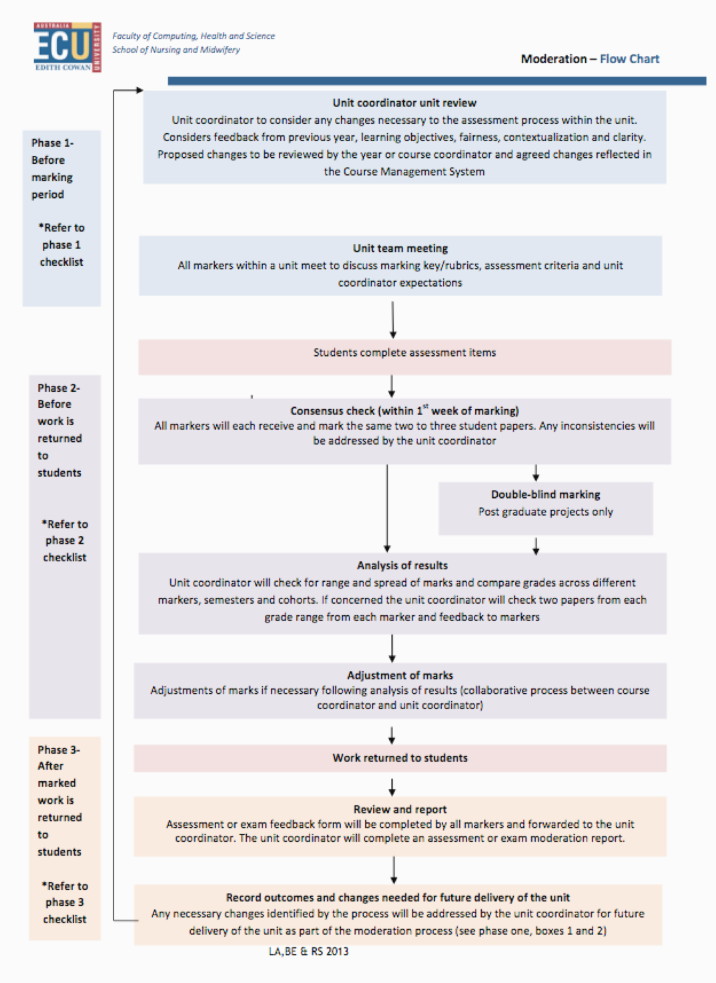

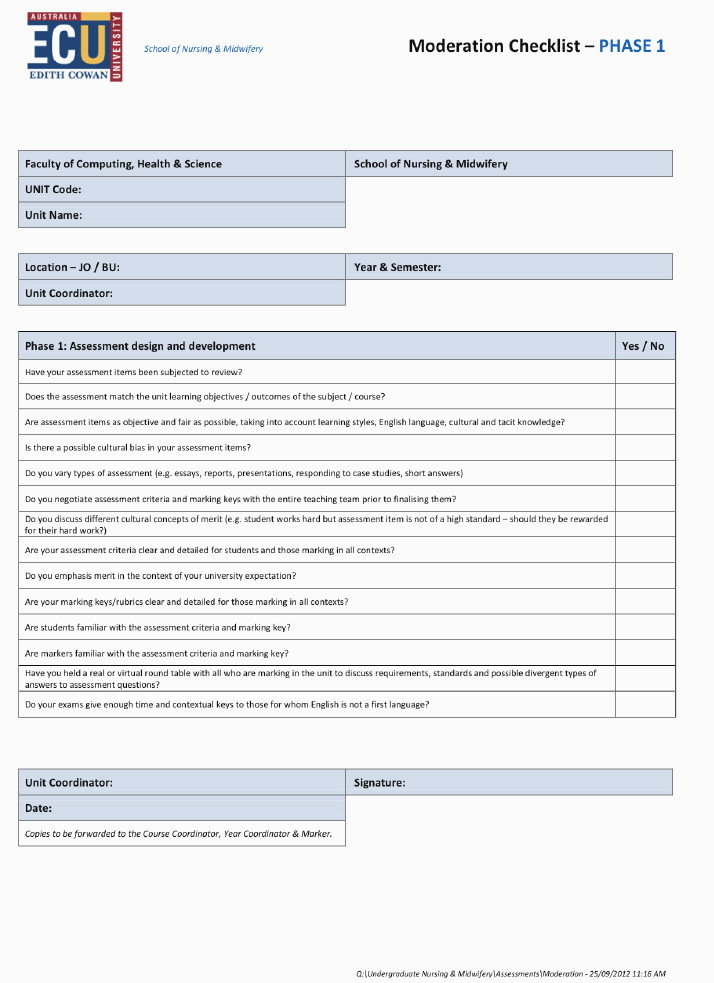

A clear process providing a system of achievable steps was developed to standardise the moderation process within the School. A visual representation of the new moderation process is provided in a flowchart (Appendix 1). Guidelines were developed to support staff through the new process, which was divided into three phases, each with a defined purpose and underpinned by ongoing, collaborative review, with improvements to be incorporated into subsequent semesters. Roles and responsibilities of unit and course coordinators and sessional staff were made explicit within the guidelines.

Phase one: Unit coordinator's review of assessments before assessments

The purpose of phase one was to review all assessment items from the previous semester before the assessment was set and make amendments as required. Assessment items that may advantage or disadvantage any students are identified and amended. The unit coordinator ensures that assessment items match the learning outcomes; are as objective and fair as possible; take into account learning styles, English language, potential for cultural bias, cultural and tacit knowledge; and are varied across the unit and course. The coordinator confirms that there is adequate time for students to complete each task. Potential marking biases, cultural issues and subjectivity are identified and amended where necessary. In phase one, prior to commencement of teaching each semester, the unit coordinator checks that the marking guides, criteria and rubrics are clear, detailed and emphasise merit for students in all contexts (e.g. offshore or on different campuses) and for the entire marking team. Issues around standardisation of grades awarded and quality of feedback provided are checked within this phase in response to feedback from sessional markers and students (including complaints, queries and appeals). Decisions are made regarding necessary changes to the assessment items to improve quality, thereby increasing student satisfaction with the unit and potentially reducing the unit coordinator administration time arising from student grievances.

A phase one checklist provides prompts and suggestions to enhance assessment quality and effectiveness such as checking for objectivity, cultural responsiveness and alignment of assessments with unit outcomes (Appendix 2). Suggested amendments and their rationale are discussed with the year or course coordinator. Curriculum drift and the preservation of the range of assessments required to assess student competence and ability are considered prior to acceptance of any changes. Agreed changes are reflected in the unit outline via the Course Management System and are recorded in the unit plan for the following semester. Explanations of changes in the unit plan demonstrate to students that the coordinator is responsive to feedback.

The purpose of the phase one meeting prior to the marking period is for all markers to share their expectations and understandings about the assessments and marking criteria. The unit coordinator has the responsibility of ensuring agreement of standards and consistency of marking so this meeting should reduce marking inconsistencies. This meeting may be face-to-face or virtual considering geographical location and cross campus teaching, especially for sessional markers. Ideally the unit coordinator sends all documents and focus questions to the markers well before the meeting enabling time for markers to identify areas that may require clarification and discussion.

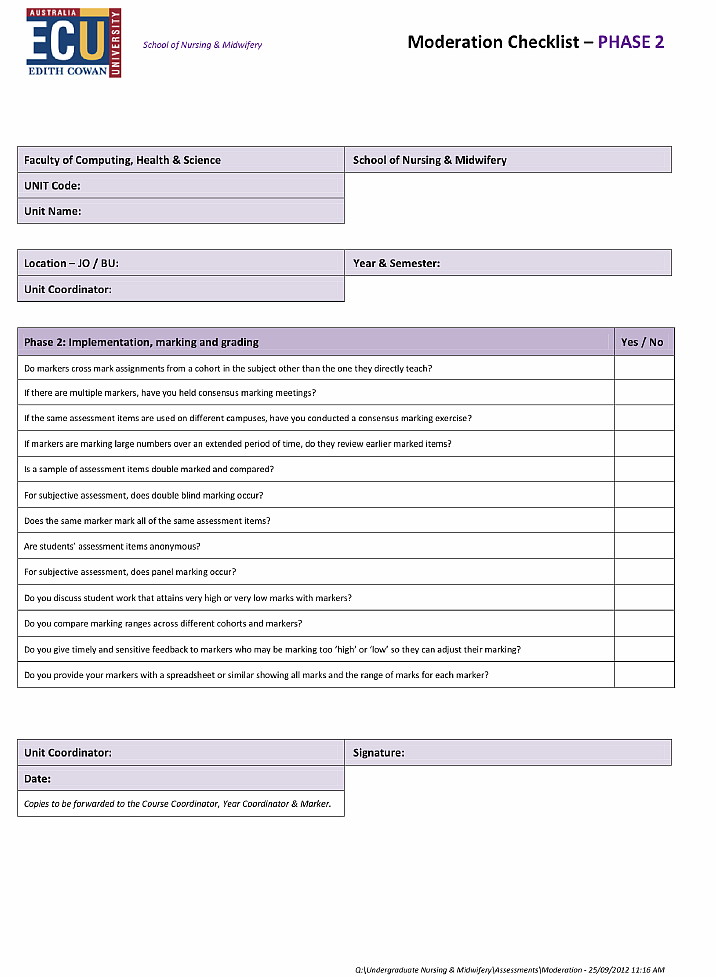

Phase two: During the marking period before work is returned to students

Phase two begins with a consensus check as early as possible during the marking period. The purpose is to ensure consistency of both marking and feedback to students. Ideally, this phase is undertaken each semester irrespective of any changes in the marking team or to assessments and marking guides. In the consensus check, the unit coordinator circulates the same two to three student papers to all markers, who mark them individually and return them, ideally within 48 hours. The unit coordinator tabulates the marks, notes any variation between markers and also notes particular questions and answers that demonstrate inconsistency. Any necessary adjustments identified from the review of these marked papers are communicated to the marking team, including clarification of understandings and the addressing of marking inconsistencies and feedback quality. A list of possible standardised feedback comments (such as Quickmarks used within Turnitin) may be developed and shared within this process. A phase two checklist (Appendix 3) is also supplied at this stage, which contains trigger questions regarding best practice in marking and moderation (such as comparing ranges of marks across tutor groups and throughout the marking time period for individual markers). Once work is marked and returned to the unit coordinator for distribution to students, an analysis of results between markers, campuses and delivery modes is undertaken. Should inconsistencies be identified, the unit coordinator should second mark a range of papers across each grade including fails (recommended two papers from each grade from each marker). A further check for the unit coordinator within this phase is arranging double-blind marking of postgraduate projects.
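The tabulation step of the consensus check can be illustrated with a short sketch. This is not the School's tool: the marks, the paper and marker labels, the function names and the 10-mark spread limit are all hypothetical, chosen only to show one way a unit coordinator might tabulate marks and note variation between markers.

```python
from statistics import mean

# Hypothetical marks (out of 100) awarded by each marker to the same three papers.
consensus_marks = {
    "Paper A": {"Marker 1": 68, "Marker 2": 72, "Marker 3": 55},
    "Paper B": {"Marker 1": 81, "Marker 2": 79, "Marker 3": 84},
    "Paper C": {"Marker 1": 47, "Marker 2": 52, "Marker 3": 49},
}


def tabulate(marks):
    """For each paper, return the mean mark, the spread between the highest
    and lowest mark, and each marker's deviation from the mean."""
    table = {}
    for paper, by_marker in marks.items():
        average = mean(by_marker.values())
        table[paper] = {
            "mean": average,
            "spread": max(by_marker.values()) - min(by_marker.values()),
            "deviation": {m: mark - average for m, mark in by_marker.items()},
        }
    return table


def papers_needing_discussion(table, max_spread=10):
    """List papers whose spread exceeds max_spread (an assumed limit,
    not one stated in the School's guidelines)."""
    return [paper for paper, row in table.items() if row["spread"] > max_spread]


table = tabulate(consensus_marks)
for paper, row in table.items():
    print(paper, "mean:", row["mean"], "spread:", row["spread"])
print("Discuss with marking team:", papers_needing_discussion(table))
```

With these invented marks only Paper A exceeds the assumed limit, so it would be the paper taken back to the marking team for clarification of the marking guide.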

To ensure these moderation checks do not increase unit coordinator workload, extra and second marking expectations should be recognised and accounted for in the semester academic workload model. It is envisaged that undertaking the first two phases of the process will reduce the need for any post-assessment scaling of marks, which requires approval by the course coordinator in consultation with the program director.
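The second-marking recommendation in phase two (two papers from each grade from each marker) amounts to a stratified sample. The sketch below shows one possible way to draw it; the function name, the grade codes, the paper identifiers and the fixed random seed are assumptions made for illustration and are not part of the School's guidelines.

```python
import random
from collections import defaultdict

# Hypothetical marked papers: (paper id, marker, grade awarded).
papers = [
    ("p1", "Marker 1", "HD"),
    ("p2", "Marker 1", "HD"),
    ("p3", "Marker 1", "HD"),
    ("p4", "Marker 1", "N"),   # "N" stands in for a fail grade here
    ("p5", "Marker 2", "C"),
]


def second_marking_sample(papers, per_group=2, seed=0):
    """Pick up to per_group papers for every (marker, grade) combination.

    A fixed seed makes the selection repeatable, which may help if the
    sample needs to be audited later.
    """
    groups = defaultdict(list)
    for paper_id, marker, grade in papers:
        groups[(marker, grade)].append(paper_id)
    rng = random.Random(seed)
    return {
        key: rng.sample(ids, min(per_group, len(ids)))
        for key, ids in sorted(groups.items())
    }


for (marker, grade), ids in second_marking_sample(papers).items():
    print(marker, grade, ids)
```

Where a marker has awarded fewer than two papers at a given grade, the sketch simply returns every paper in that group, so the number of papers selected also gives a rough estimate of the second-marking load to be recognised in the workload model.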

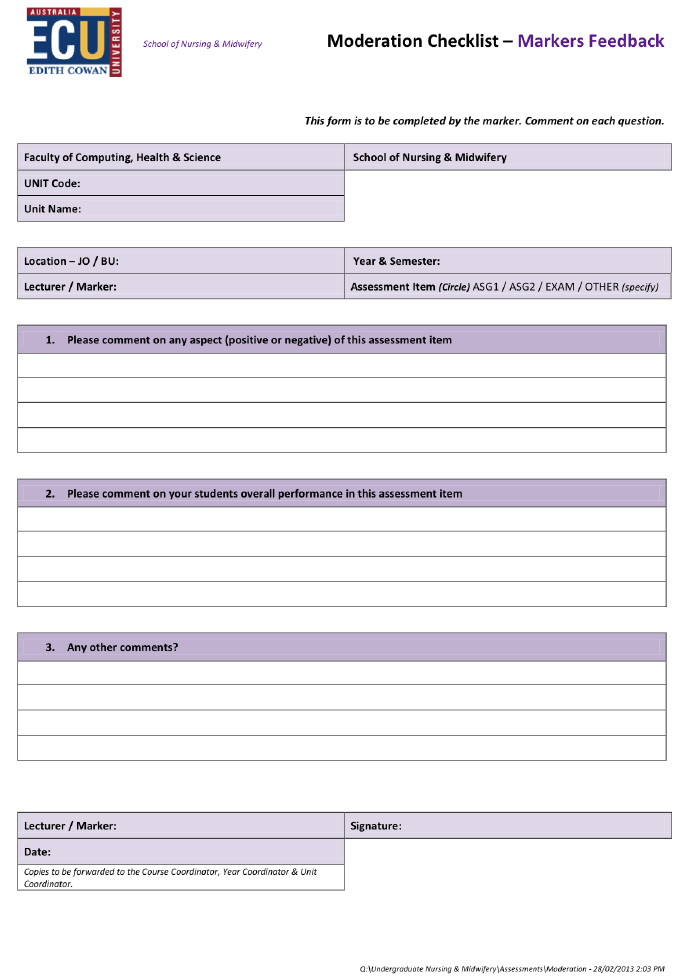

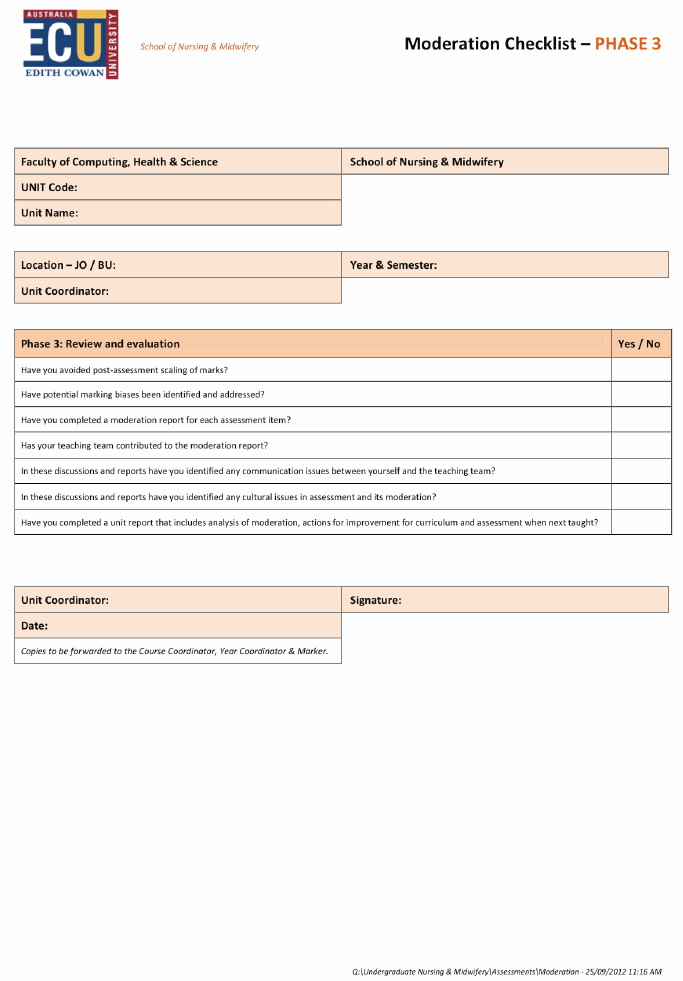

Phase three: Review and report

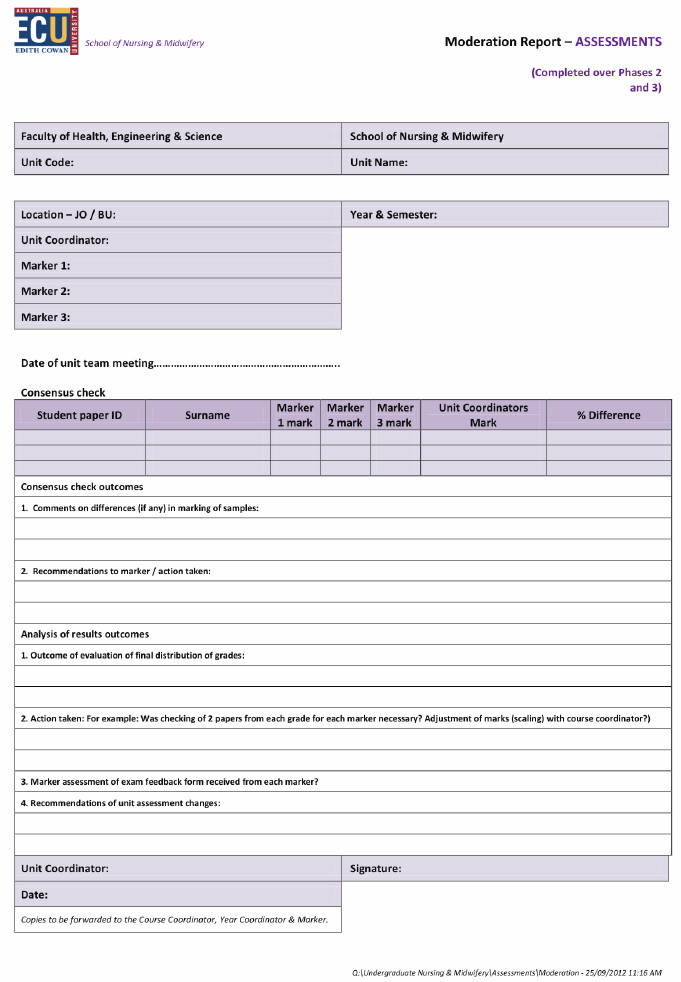

Phase three occurs after marked work is returned to students. The purpose of this stage is to review and report on the entire process to inform future improvements. A record of the process is necessary to retain transparency, enable continuous improvement and enable retrospective audit to be undertaken. Each marker completes a feedback form indicating strengths, weaknesses and suggestions for improvement relating to the assessment they have marked (Appendix 4). An assessment or exam moderation report is completed by the unit coordinator and shared with the course coordinator (Appendix 5). As with the previous two phases, a checklist to support this phase has been developed (Appendix 6).

In order to provide a clear and easy-to-follow guide for staff, the guidelines, flowchart, checklists and feedback forms were made available to all markers via the School's staff Blackboard site. The process was also showcased across a number of fora throughout second semester 2012. Following this period of discussion, the process was implemented in semester one, 2013 across all theory units in both undergraduate and postgraduate programs.

Engagement with the process

From the email replies and moderation report forms submitted, levels of engagement in the process were ascertained. Three of the twenty-four unit coordinators (12.5%) indicated that they had engaged with the process. Various reasons for non-engagement were supplied by the remaining twenty-one staff. Of these, seven (33%) responded that they were not aware of the process, three (14%) continued to moderate using their own system and one (5%) had made the decision not to comply as she was making significant changes to the unit in the following semester. There was no response from the other ten (48%) (Figure 1).

Figure 1: Reasons given for non-engagement

Analysis of the data from the moderation reports completed by the three complying unit coordinators was divided into indication of holding a unit team meeting, consensus meeting outcome, analysis of results outcomes and changes needed to assessments indicated by the process.

Unit team meeting

All three unit coordinators held a meeting prior to the marking period including all markers within the unit marking team.

Consensus check

All three unit coordinators conducted a consensus check within the first week of the marking period. Marks for two to three papers per assessment item were reviewed across all markers; the percentage difference in marking was noted and recommendations to markers were recorded (Table 1).

| Markers | Assessment 1 variation | Assessment 2 variation | Recommendations |
|---|---|---|---|
| Marker 1 | 8% | 5% | None |
| Marker 2 | 7% | 5% | None |
| Marker 3 | 12% | 15% | Consensus discussion undertaken |
| Marker 4 | 6% | 7% | None |
| Marker 5 | 2% | 4% | None |
| Marker 6 | 9% | 4% | None |
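The decision recorded in Table 1 can be expressed as a simple rule. The sketch below uses the variation figures from the table; the 10% threshold and the function name are assumptions, since the paper does not state the level of variation at which a consensus discussion was triggered.

```python
# Percentage variation per marker (Assessment 1, Assessment 2), from Table 1.
table_1 = {
    "Marker 1": (8, 5),
    "Marker 2": (7, 5),
    "Marker 3": (12, 15),
    "Marker 4": (6, 7),
    "Marker 5": (2, 4),
    "Marker 6": (9, 4),
}

THRESHOLD = 10  # assumed cut-off in percent; not specified in the paper


def markers_to_follow_up(variation, threshold=THRESHOLD):
    """Return markers whose variation on any assessment exceeds the threshold."""
    return [
        marker
        for marker, values in variation.items()
        if max(values) > threshold
    ]


print(markers_to_follow_up(table_1))
```

Under this assumed threshold the rule selects only Marker 3, which agrees with the single consensus discussion recorded in the table.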

Analysis of results

All three unit coordinators indicated they conducted an analysis of marks awarded across all markers and assessment items prior to marks being released to students. No scaling of marks was deemed necessary by any of the three unit coordinators.

The three staff who did engage with the process returned moderation report forms for the units they coordinated. From an analysis of these forms it was evident all three phases of the process had been followed. The outcomes of the process were positive, with only one staff member indicating the necessity to work with a marker in the consensus period to adjust their marking and none of the staff required scaling of marked work.

The identification of factors which may have influenced non-engagement with the process was beyond the scope of this project. However, possible reasons for lack of uptake of the process may include genuine lack of awareness or conscious decisions not to engage. The latter may have been due to concerns about increased workload, uncertainty about the correct procedure and poor understanding of the importance and potential benefits to staff and students. Furthermore, experienced academics have been found to have some reluctance to share their processes of decision-making about assessment marks with new academics, which may also have been a contributory factor for staff working with sessional staff in marking teams (Garrow & Tawse, 2009).

A three-phase continuous approach was developed and a flowchart designed as a diagrammatic representation of the entire process. Prior to the implementation of this initiative, widespread discussion was undertaken with the academic staff, including written and oral communication at staff meetings. The development of the moderation process was an example of a successful collaborative approach between academic staff in a School and the Centre for Learning and Development within the University. The initial uptake of this process among academic staff in the School was less successful, however, with disappointingly few staff engaging. Whilst the positive outcomes for those staff who did utilise the process are encouraging, further investigation into the reasons for non-engagement is expected to support the refinement of the process and its future implementation within the School.

ALTC (2010). Moderation for fair assessment in TNE Literature Review. http://resource.unisa.edu.au/course/view.php?id=285&topic=1

ALTC (2012a). Assessment moderation toolkit. http://resource.unisa.edu.au/course/view.php?id=285&topic=1

ALTC (2012b). Moderation checklist. http://resource.unisa.edu.au/course/view.php?id=285&topic=1

Bird, F. & Yucel, R. (2010). Building sustainable expertise in marking: Integrating the moderation of first year assessment. In Proceedings ATN Assessment Conference, University of Technology Sydney. http://www.uts.edu.au/sites/default/files/Bird.pdf

Bloxham, S. (2009). Marking and moderation in the UK: False assumptions and wasted resources. Assessment and Evaluation in Higher Education, 34(2), 209-220. http://dx.doi.org/10.1080/02602930801955978

Bloxham, S., Boyd, P. & Orr, S. (2011). Mark my words: The role of assessment criteria in UK higher education grading practices. Studies in Higher Education, 36(6), 655-670. http://dx.doi.org/10.1080/03075071003777716

Edith Cowan University (2013). ECU Excellence Framework. http://www.ecu.edu.au/GPPS/policies_db/policies_view.php?rec_id=0000000391

Guldberg, K. & Mackness, J. (2009). Foundations of communities of practice: Enablers and barriers to participation. Journal of Computer Assisted Learning, 25(6), 528-538. http://dx.doi.org/10.1111/j.1365-2729.2009.00327.x

Harvey, L. & Williams, J. (2010). Fifteen years of quality in higher education. Quality in Higher Education, 16(1), 3-36. http://dx.doi.org/10.1080/13538321003679457

Hughes, G. (2011). Towards a personal best: A case for introducing ipsative assessment in higher education. Studies in Higher Education, 36(3), 353-367. http://dx.doi.org/10.1080/03075079.2010.486859

Hunter, K. & Docherty, P. (2011). Reducing variation in the assessment of student writing. Assessment & Evaluation in Higher Education, 36(1), 109-124. http://dx.doi.org/10.1080/02602930903215842

James, R. (2003). Academic standards and the assessment of student learning: Some current issues in Australian higher education. Tertiary Education and Management, 9(3), 187-198. http://dx.doi.org/10.1080/13583883.2003.9967103

Lave, J. & Wenger, E. (1991). Situated learning: Legitimate peripheral participation. Cambridge, England: Cambridge University Press.

Lawson, K. & Yorke, J. (2009). The development of moderation across the institution: A comparison of two approaches. Proceedings ATN Assessment Conference, RMIT University. http://emedia.rmit.edu.au/atnassessment09/sites/emedia.rmit.edu.au.atnassessment09/files/180.pdf

Office of Assessment Teaching and Learning (2010). Developing appropriate assessment tasks. In Teaching and Learning at Curtin 2010 (pp. 22-44). Perth: Curtin University. http://otl.curtin.edu.au/local/downloads/learning_teaching/tl_handbook/tlbookchap5_2012.pdf

Orr, S. (2007). Assessment moderation: Constructing the marks and constructing the students. Assessment & Evaluation in Higher Education, 32(6), 645-656. http://dx.doi.org/10.1080/02602930601117068

Roberts, J. (2006). Limits to communities of practice. Journal of Management Studies, 43(3), 623-639. http://dx.doi.org/10.1111/j.1467-6486.2006.00618.x

Sadler, D. R. (2009). Grade integrity and the representation of academic achievement. Studies in Higher Education, 34(7), 807-826. http://dx.doi.org/10.1080/03075070802706553

Salamonson, Y., Halcomb, E. J., Andrew, S., Peters, K. & Jackson, D. (2010). A comparative study of assessment grading and nursing students' perceptions of quality in sessional and tenured teachers. Journal of Nursing Scholarship, 42(4), 423-429. http://dx.doi.org/10.1111/j.1547-5069.2010.01365.x

Smith, C. (2012). Why should we bother with assessment moderation? Nurse Education Today, 32(6), e45-e48. http://dx.doi.org/10.1016/j.nedt.2011.10.010

The Scottish Government (2011). Curriculum for excellence: Building the curriculum 5: A framework for assessment. http://www.educationscotland.gov.uk/Images/BtC5_assess_tcm4-582215.pdf

Wenger, E. (2002). Cultivating communities of practice: A guide to managing knowledge. Boston, MA: Harvard Business School Press.

Please cite as: Ewens, B., Andrew, L. & Scott, R. (2014). Everything in moderation: The implementation of a quality initiative. In Transformative, innovative and engaging. Proceedings of the 23rd Annual Teaching Learning Forum, 30-31 January 2014. Perth: The University of Western Australia. http://ctl.curtin.edu.au/professional_development/conferences/tlf/tlf2014/refereed/ewens.html

© Copyright 2014 Bev Ewens, Lesley Andrew and Rowena Scott. The authors assign to the TL Forum and not for profit educational institutions a non-exclusive licence to reproduce this article for personal use or for institutional teaching and learning purposes, in any format, provided that the article is used and cited in accordance with the usual academic conventions.